My Stack: Tools, Frameworks, and Python Packages I Use for AI and ML 💻

Building an AI startup in 2025 means making sharp choices with your tech stack. Every tool and service needs to be lightweight, scalable, and developer-friendly—especially when you’re running lean.

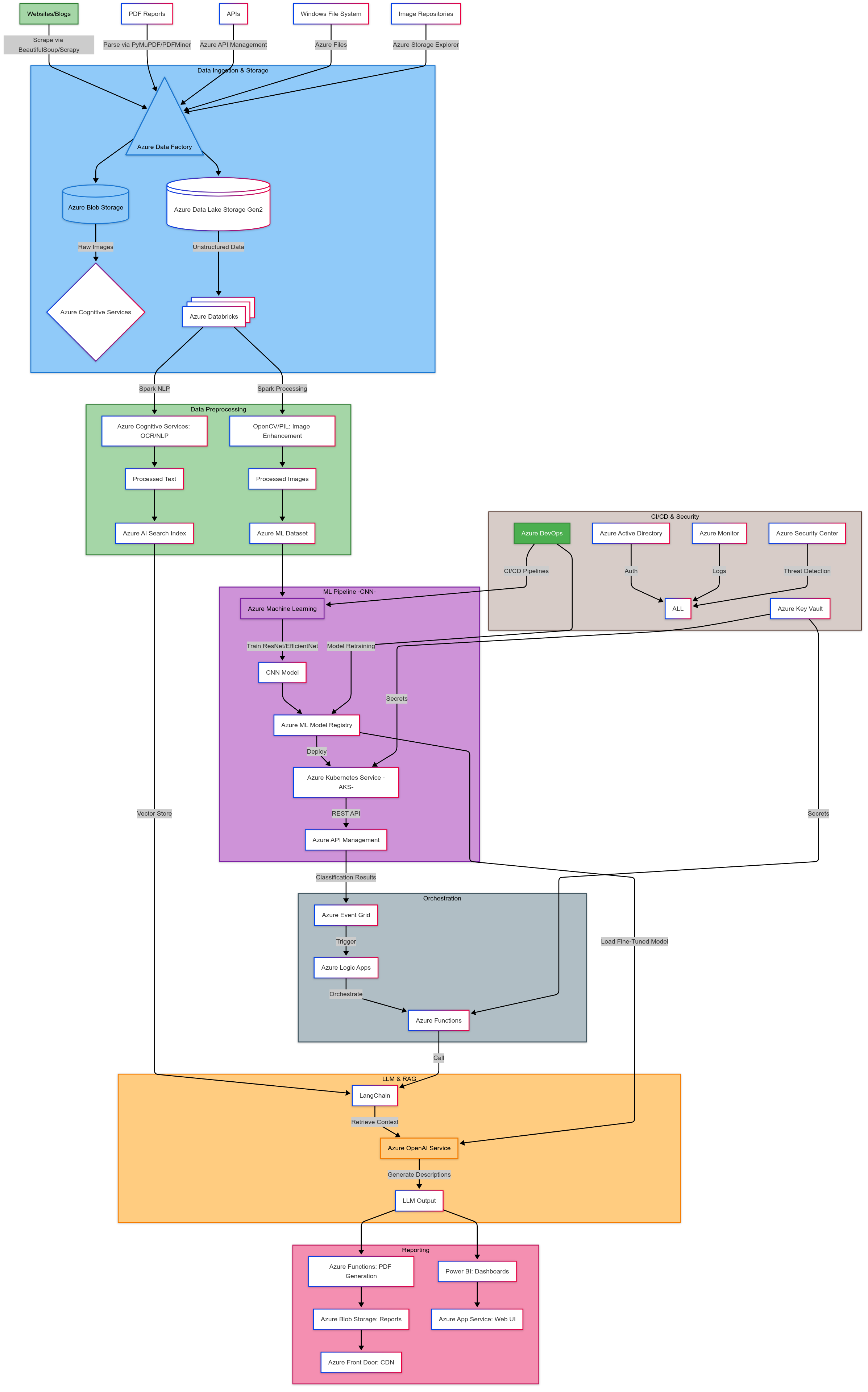

In this post, I'm breaking down my core ML stack, powered by Azure Cloud Services and Mask R-CNN as my primary model architecture.

☁️ Why Azure?

Azure offers a tightly integrated ecosystem for ML workflows, from data storage and orchestration to model training, deployment, and inference. I chose Azure for its maturity, Kubernetes support, and integration with enterprise services like Microsoft Entra ID (formerly Azure Active Directory), Key Vault, and Azure DevOps.

🧠 Overview of My Stack

- Language: Python 3.11+

- Core ML Framework: PyTorch

- Main Model: Mask R-CNN (via Detectron2)

- Labeling Tools: Roboflow, CVAT

- Training Pipeline: Azure Databricks + Azure ML

- Storage: Azure Blob Storage, Azure Data Lake Gen2

- Model Serving: Azure Kubernetes Service (AKS) + Azure ML Model Registry

- API Gateway: Azure API Management

- Trigger + Orchestration: Azure Functions, Logic Apps, Event Grid

- LLM Integration: Azure OpenAI + LangChain

- Reporting: Azure App Service, PDF via Azure Functions, Power BI Dashboards

🧱 Core Tools & Libraries

- PyTorch: base framework

- Detectron2: Mask R-CNN and other vision models

- scikit-learn: evaluation metrics

- albumentations: image augmentations

- torchvision, OpenCV, matplotlib, pycocotools
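To make the Detectron2 piece concrete, here is a minimal sketch of how a Mask R-CNN config could be built from a COCO-pretrained baseline. The base config name, the class count, and the solver values are assumptions chosen for illustration, not the settings used in this stack.

```python
from typing import List

# Assumed baseline from the Detectron2 model zoo; swap in whichever config you use.
BASE_CONFIG = "COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x.yaml"


def build_overrides(num_classes: int, max_iter: int = 1000) -> List:
    """Return a flat key/value list in the form accepted by cfg.merge_from_list."""
    if num_classes < 1:
        raise ValueError("num_classes must be at least 1")
    return [
        "MODEL.ROI_HEADS.NUM_CLASSES", num_classes,  # number of custom classes
        "SOLVER.MAX_ITER", max_iter,                 # illustrative value only
        "SOLVER.IMS_PER_BATCH", 2,                   # illustrative value only
    ]


def build_cfg(num_classes: int):
    """Build a Mask R-CNN config that starts from COCO-pretrained weights."""
    # Imported lazily so build_overrides works without Detectron2 installed.
    from detectron2 import model_zoo
    from detectron2.config import get_cfg

    cfg = get_cfg()
    cfg.merge_from_file(model_zoo.get_config_file(BASE_CONFIG))
    cfg.MODEL.WEIGHTS = model_zoo.get_checkpoint_url(BASE_CONFIG)
    cfg.merge_from_list(build_overrides(num_classes))
    return cfg
```

Keeping the overrides in a plain list makes them easy to log alongside each experiment, and the resulting `cfg` can be handed to Detectron2's `DefaultTrainer`.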

📦 Azure-Specific Components

Azure Blob Storage + Data Lake

Used to store and organize both raw and preprocessed image data. Perfect for managing large volumes of labeled datasets and experiment outputs.
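One way to keep raw and preprocessed data organized is a fixed blob naming scheme. The sketch below assumes a hypothetical `stage/dataset/version/filename` layout; the stage names and the `upload_image` helper are illustrative, not the layout this stack necessarily uses.

```python
from pathlib import PurePosixPath

# Hypothetical top-level folders inside a container.
STAGES = ("raw", "preprocessed", "experiments")


def blob_path(stage: str, dataset: str, version: str, filename: str) -> str:
    """Build a blob name such as 'raw/parts/v1/img_001.png'."""
    if stage not in STAGES:
        raise ValueError(f"unknown stage {stage!r}, expected one of {STAGES}")
    return str(PurePosixPath(stage) / dataset / version / filename)


def upload_image(conn_str: str, container: str, path: str, data: bytes) -> None:
    """Upload bytes to Blob Storage under the given blob name."""
    # Imported lazily so blob_path works without azure-storage-blob installed.
    from azure.storage.blob import BlobServiceClient

    service = BlobServiceClient.from_connection_string(conn_str)
    blob = service.get_blob_client(container=container, blob=path)
    blob.upload_blob(data, overwrite=True)
```

For example, `blob_path("raw", "parts", "v1", "img_001.png")` gives `raw/parts/v1/img_001.png`, so every dataset version lives under a predictable prefix.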

Azure Databricks

I use this for Spark-based ETL and preprocessing, especially for preparing training datasets at scale.

Azure Machine Learning & Model Registry

My training jobs and experiments run here. Once a model is validated, I register it in the Model Registry and deploy it to AKS for scalable inference.
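The "validate, then register" step can be sketched as a simple gate in front of the registry call. The metric (mask AP), the thresholds, and the helper names are assumptions for illustration; the registration helper uses the Azure ML Python SDK v2 and expects an already-authenticated `MLClient`.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EvalResult:
    mask_ap: float  # assumed metric: segmentation AP on a held-out set


def should_register(candidate: EvalResult,
                    current_best: Optional[EvalResult],
                    min_ap: float = 0.5) -> bool:
    """Register only if the candidate clears the floor and beats the current best."""
    if candidate.mask_ap < min_ap:
        return False
    if current_best is None:
        return True
    return candidate.mask_ap > current_best.mask_ap


def register_model(ml_client, name: str, path: str):
    """Register model files in the Azure ML registry (SDK v2)."""
    # Imported lazily so the gate above works without azure-ai-ml installed.
    from azure.ai.ml.constants import AssetTypes
    from azure.ai.ml.entities import Model

    model = Model(path=path, name=name, type=AssetTypes.CUSTOM_MODEL)
    return ml_client.models.create_or_update(model)
```

Separating the decision from the registry call keeps the gate easy to unit test and makes the promotion rule explicit in code review.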

Azure Kubernetes Service + API Management

Trained models are containerized and served through AKS, exposed via a REST API, and managed securely with Azure API Management.
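From a client's point of view, calling the model through API Management could look roughly like this. The `/predict` route and the JSON payload shape are hypothetical; `Ocp-Apim-Subscription-Key` is the default header API Management uses for subscription keys.

```python
import json
import urllib.request


def build_inference_request(base_url: str,
                            subscription_key: str,
                            image_url: str) -> urllib.request.Request:
    """Prepare a POST request to a (hypothetical) /predict route behind APIM."""
    payload = json.dumps({"image_url": image_url}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/predict",
        data=payload,
        headers={
            "Content-Type": "application/json",
            "Ocp-Apim-Subscription-Key": subscription_key,
        },
        method="POST",
    )
```

Passing the returned request to `urllib.request.urlopen` would send it; building it separately keeps the key handling and payload shape testable without a live endpoint.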

Azure Functions + Logic Apps + Event Grid

These handle triggers, automation, and orchestration—such as post-inference workflows or LLM Q&A invocations.
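As an illustration of the trigger logic, the sketch below routes an Event Grid `Microsoft.Storage.BlobCreated` event to a follow-up step. The folder names (`/raw/`, `/predictions/`) and the workflow names are hypothetical; only the event type and the `data.url` field come from the Event Grid blob event schema.

```python
from typing import Optional

IMAGE_SUFFIXES = (".png", ".jpg", ".jpeg")


def route_event(event: dict) -> Optional[str]:
    """Map a blob-created event to a workflow name, or None to ignore it."""
    if event.get("eventType") != "Microsoft.Storage.BlobCreated":
        return None
    url = event.get("data", {}).get("url", "").lower()
    if "/raw/" in url and url.endswith(IMAGE_SUFFIXES):
        return "run_inference"    # new image uploaded: score it
    if "/predictions/" in url and url.endswith(".json"):
        return "generate_report"  # new prediction file: post-inference step
    return None
```

Inside an Azure Function with an Event Grid trigger, the handler would convert the incoming event to a dict and call `route_event` to decide which downstream step to start.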

Azure OpenAI + LangChain

I integrate LLMs for post-classification reasoning, auto-report generation, and insights by connecting LangChain with Azure OpenAI endpoints.
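A minimal sketch of the report-generation step, assuming detections arrive as dicts with a `label` key and that the `langchain-openai` package is installed. The prompt wording, the detection format, and the function names are illustrative; `AzureChatOpenAI` reads the endpoint and key from the `AZURE_OPENAI_ENDPOINT` and `AZURE_OPENAI_API_KEY` environment variables.

```python
from typing import Dict, List, Tuple


def summarize_detections(detections: List[Dict]) -> str:
    """Turn raw detections into a compact per-class count list."""
    counts: Dict[str, int] = {}
    for det in detections:
        counts[det["label"]] = counts.get(det["label"], 0) + 1
    lines = [f"- {label}: {n} instance(s)" for label, n in sorted(counts.items())]
    return "\n".join(lines) if lines else "- no detections"


def build_messages(detections: List[Dict]) -> List[Tuple[str, str]]:
    """Build (role, content) message tuples for a chat model."""
    return [
        ("system", "You write short reports from instance segmentation results."),
        ("human", "Detections:\n" + summarize_detections(detections)
                  + "\nWrite a three-sentence summary."),
    ]


def generate_report(detections: List[Dict], deployment: str, api_version: str) -> str:
    """Send the prompt to an Azure OpenAI deployment through LangChain."""
    # Imported lazily so the prompt helpers work without LangChain installed.
    from langchain_openai import AzureChatOpenAI

    llm = AzureChatOpenAI(azure_deployment=deployment, api_version=api_version)
    return llm.invoke(build_messages(detections)).content
```

Summarizing detections before prompting keeps the token count small and gives the model structured input instead of raw mask data.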

Reporting & Output

All generated content flows into Azure App Service (for the UI) or Power BI dashboards, or is exported to PDF via serverless Azure Functions.

💬 Final Thoughts

This stack reflects what I believe every AI founder should aim for: speed, modularity, and production readiness.

No hype tools, no overkill infrastructure—just the right services to build, learn, and iterate quickly in production-like environments.

Coming soon: A deep dive into how I annotate data for Mask R-CNN, and my automated training loop using Azure ML pipelines.

Questions or want a code snippet? Let’s connect.